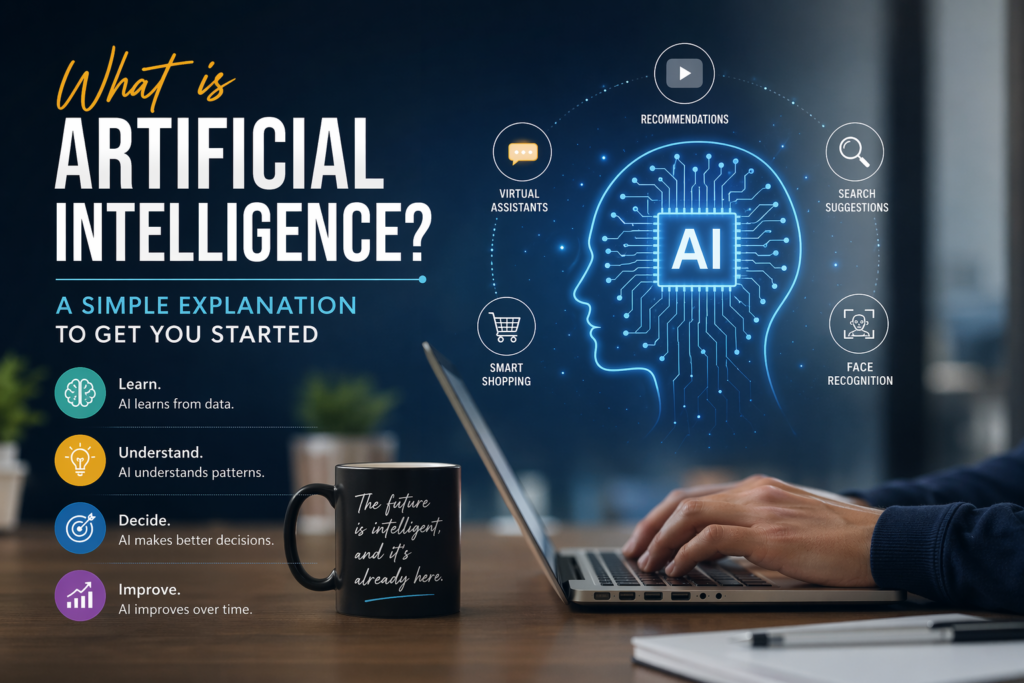

It feels like magic… but it’s actually math.

You type a question.

You get a smart answer in seconds.

It almost feels like ChatGPT understands you.

But here’s the truth:

👉 It doesn’t think.

👉 It doesn’t know facts the way humans do.

👉 It predicts.

Let’s break this down in the simplest way possible.

Step 1: Your Input Becomes Data

When you type something like:

“Explain cloud computing in simple terms”

ChatGPT doesn’t see it as a “question” like a human would.

Instead, your sentence is:

👉 Broken into smaller pieces called tokens

Example (simplified; real tokenizers often split longer or rarer words into smaller pieces):

- “Explain”

- “cloud”

- “computing”

- “in”

- “simple”

- “terms”

These tokens are converted into numbers so the model can process them.
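To make this concrete, here is a minimal Python sketch of the idea. It assumes a tiny hypothetical word-level vocabulary; `VOCAB`, `tokenize` and `encode` are illustrative names, not part of any real library. Real tokenizers, including the ones behind ChatGPT, use much larger vocabularies of sub-word pieces.

```python
# A toy word-level tokenizer. The vocabulary below is hypothetical; real
# tokenizers work on sub-word pieces and have tens of thousands of entries.
VOCAB = {"explain": 0, "cloud": 1, "computing": 2, "in": 3, "simple": 4, "terms": 5}
UNKNOWN_ID = len(VOCAB)  # ID reserved for words not in the vocabulary


def tokenize(text):
    """Split text on whitespace and lowercase each piece."""
    return [word.lower() for word in text.split()]


def encode(tokens):
    """Map each token to its numeric ID, using UNKNOWN_ID for unseen words."""
    return [VOCAB.get(token, UNKNOWN_ID) for token in tokens]


tokens = tokenize("Explain cloud computing in simple terms")
print(tokens)          # ['explain', 'cloud', 'computing', 'in', 'simple', 'terms']
print(encode(tokens))  # [0, 1, 2, 3, 4, 5]
```

Any word missing from the toy vocabulary gets the reserved `UNKNOWN_ID`. Sub-word tokenizers avoid this problem by breaking unfamiliar words into smaller known pieces.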

Step 2: The Model Looks for Patterns

ChatGPT is trained on massive amounts of text data.

During training, it learns patterns like:

- Words that usually come together

- Sentence structures

- Context relationships
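One old and very simple way to capture "words that usually come together" is to count which word follows which in a collection of text. This is called a bigram model. The sketch below does that on a made-up three-sentence corpus.

This is only an analogy. ChatGPT does not store a count table like this; it learns such patterns as numeric weights inside a large neural network.

```python
from collections import Counter, defaultdict

# A tiny made-up corpus standing in for "massive amounts of text".
corpus = [
    "cloud computing is a technology",
    "cloud computing is a model",
    "cloud computing is the future",
]


def count_next_words(sentences):
    """For each word, count which words follow it across all sentences."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        # Pair each word with the word right after it
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


follows = count_next_words(corpus)
print(follows["is"])  # Counter({'a': 2, 'the': 1})
```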

So when you type a prompt, the model is basically asking:

👉 “What word (more precisely, which token) is most likely to come next?”

Not once.

But again and again, for every word in the response.

Step 3: Prediction Happens (The Core Idea)

This is the most important concept:

👉 ChatGPT predicts the next word based on probability.

For example:

“Cloud computing is…”

Possible next words:

- “a” ✅ (most likely)

- “the”

- “an”
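Under the hood, the model gives every candidate a raw score. It then turns those scores into probabilities, typically with a function called softmax.

In this sketch the scores are invented for illustration. A real model computes them over its entire vocabulary, not just three words.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    largest = max(scores.values())  # subtract the max for numerical stability
    exps = {word: math.exp(s - largest) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}


# Hypothetical raw scores for the word after "Cloud computing is"
scores = {"a": 3.0, "the": 1.5, "an": 0.5}
probs = softmax(scores)
for word, p in sorted(probs.items(), key=lambda kv: -kv[1]):
    print(f"{word!r}: {p:.2f}")
```

A higher score always means a higher probability, so "a" comes out on top, but the other words keep some chance of being picked.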

Then:

“Cloud computing is a…”

Next prediction:

- “technology”

- “way”

- “model”

And this continues…

👉 Word by word

👉 Sentence by sentence

👉 Until a full response is generated
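The word-by-word loop can be sketched as follows. The probability table `NEXT_WORD_PROBS` is hypothetical and only looks at the last word. A real model, by contrast, recomputes probabilities from the whole text so far at every step.

This version always picks the most likely word, which is called greedy decoding. Chat systems often sample from the probabilities instead, which is one reason the same prompt can produce different answers.

```python
# Hypothetical next-word probabilities, keyed only on the previous word.
# "<end>" marks the point where the answer is finished.
NEXT_WORD_PROBS = {
    "is": {"a": 0.7, "the": 0.2, "an": 0.1},
    "a": {"technology": 0.5, "way": 0.3, "model": 0.2},
    "technology": {"<end>": 1.0},
}


def generate(prompt, max_words=10):
    """Greedy generation: repeatedly append the most likely next word."""
    words = prompt.split()
    for _ in range(max_words):
        options = NEXT_WORD_PROBS.get(words[-1])
        if not options:
            break  # no prediction available for this word
        next_word = max(options, key=options.get)
        if next_word == "<end>":
            break  # stop marker reached
        words.append(next_word)
    return " ".join(words)


print(generate("Cloud computing is"))  # Cloud computing is a technology
```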

Step 4: Context Makes It Smart

If ChatGPT only predicted random words, it would produce nonsense.

But here’s what makes it powerful:

👉 It uses context

That means:

- It takes your question and earlier messages into account

- It tracks conversation flow

- It aligns responses with your intent
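In practice, "remembering" the conversation means the earlier messages are sent back to the model as part of its input each time. The sketch below joins a conversation into one input. It keeps only the most recent words, to mimic the limited context window such models have.

`build_context` and `MAX_CONTEXT` are hypothetical names. Counting words is also a simplification: real limits are measured in tokens and are far larger than 12.

```python
# Illustrative limit, not a real model's value; words stand in for tokens.
MAX_CONTEXT = 12


def build_context(messages, max_words=MAX_CONTEXT):
    """Join (role, text) messages into one input, keeping only the most recent words."""
    words = []
    for role, text in messages:
        words.extend(f"{role}: {text}".split())
    return " ".join(words[-max_words:])  # older words fall out of the window


conversation = [
    ("user", "Explain cloud computing in simple terms"),
    ("assistant", "It means renting computers over the internet"),
    ("user", "Give an example"),
]
print(build_context(conversation))
```

With this deliberately tiny limit, the user's first question has already dropped out of the input. This is why very long conversations can lose track of early details.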

So instead of random text, you get:

👉 Structured

👉 Relevant

👉 Human-like answers

Step 5: The Architecture Behind It (Simplified)

At the core, ChatGPT is built using something called a Transformer model.

You don’t need to go deep into the math, but here’s the idea:

- It processes words in relation to each other

- It works out which words are most relevant to each other

- It gives attention to key parts of your input

This is powered by something called:

👉 Attention Mechanism

Which basically means:

👉 “Focus more on what matters”
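The attention step in the original Transformer design is called scaled dot-product attention. It works in three moves:

- Each word's query vector is compared with every word's key vector.
- The resulting scores become weights via softmax.
- The output is a weighted mix of value vectors.

Below is a minimal pure-Python sketch for a single query, using made-up 2-D vectors. In a real model these vectors are learned and have far more dimensions.

```python
import math


def softmax(xs):
    """Turn a list of scores into weights that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for one query vector.

    Scores each key by its dot product with the query, divided by the square
    root of the vector size, turns the scores into weights with softmax, and
    returns the weighted average of the value vectors together with the weights.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return output, weights


# Hypothetical vectors for three words in the input
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query = [1.0, 0.0]
output, weights = attention(query, keys, values)
print([round(w, 2) for w in weights])
```

Here the query matches the first and third keys equally, so those words get the largest (and equal) weights while the second word gets less. That is "focus more on what matters" expressed in numbers.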

Step 6: Response Generation in Real-Time

Once everything is processed:

- Each prediction takes only milliseconds

- Words are generated one after another

- The full answer appears within seconds

All of this happens:

👉 In real-time

👉 In the cloud

👉 At massive scale
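Each word depends on the ones before it, so the answer is produced one piece at a time, and chat interfaces usually display it as it arrives. This sketch only simulates that streaming effect with a Python generator. `stream_words` is a hypothetical helper, and the fixed delay is an arbitrary stand-in for the time each prediction takes.

```python
import time


def stream_words(answer, delay_seconds=0.05):
    """Yield an answer one word at a time, pausing briefly before each word."""
    for word in answer.split():
        time.sleep(delay_seconds)  # stand-in for the time one prediction takes
        yield word


for word in stream_words("Cloud computing is a technology"):
    print(word, end=" ", flush=True)
print()
```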

So… Does ChatGPT Really Understand?

Short answer:

👉 No (not like humans)

What it does is:

- Recognize patterns

- Predict language

- Simulate understanding

And it does this extremely well.

Why This Matters for You

If you understand this, you unlock a big advantage.

Because now you know:

👉 ChatGPT responds based on how you ask

Which means:

- Better prompts → Better answers

- Clear input → Clear output

Simple Example

Instead of:

👉 “Tell me about AI”

Try:

👉 “Explain AI in simple terms with real-world examples”

You’ll instantly see the difference.

My Personal Advice

Don’t treat ChatGPT like Google.

Treat it like:

👉 A smart assistant that needs clear instructions

The more structured your input,

the better your output.

Key Takeaway

A simple way to think about it:

👉 ChatGPT = Prediction Engine + Context Understanding

It doesn’t “know”

It predicts what sounds right

And that’s what makes it powerful.

What to Learn Next

Now that you understand how ChatGPT works, continue your journey:

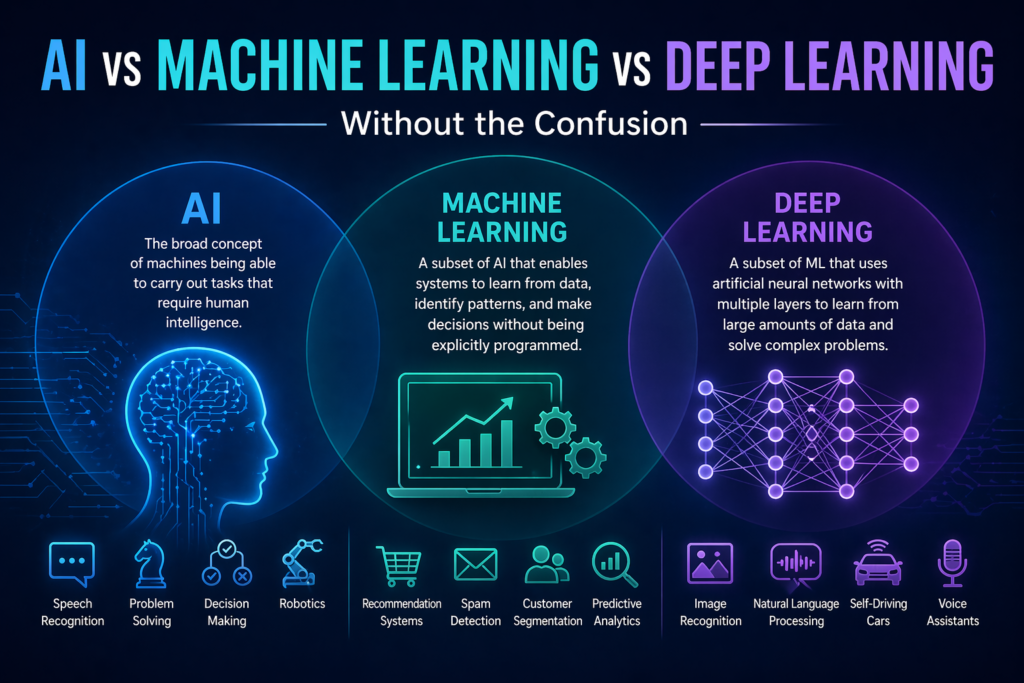

- AI vs Machine Learning vs Deep Learning

- Prompt Engineering (How to Talk to AI)

- Top AI Tools You Should Start Using

CareerFlow Academy Mission

Learn today. Apply tomorrow. Grow continuously.

You don’t need to learn everything at once.

Just understand one concept at a time.

👉 That’s how real learning happens.