If you’ve spent any time online lately, you’ve probably seen AI described as a digital sorcerer. It’s writing your emails, generating hyper-realistic art, and predicting the weather with eerie accuracy. It feels like there’s a “ghost in the machine” that’s actually thinking.

But I’m going to let you in on a secret: There is no magic.

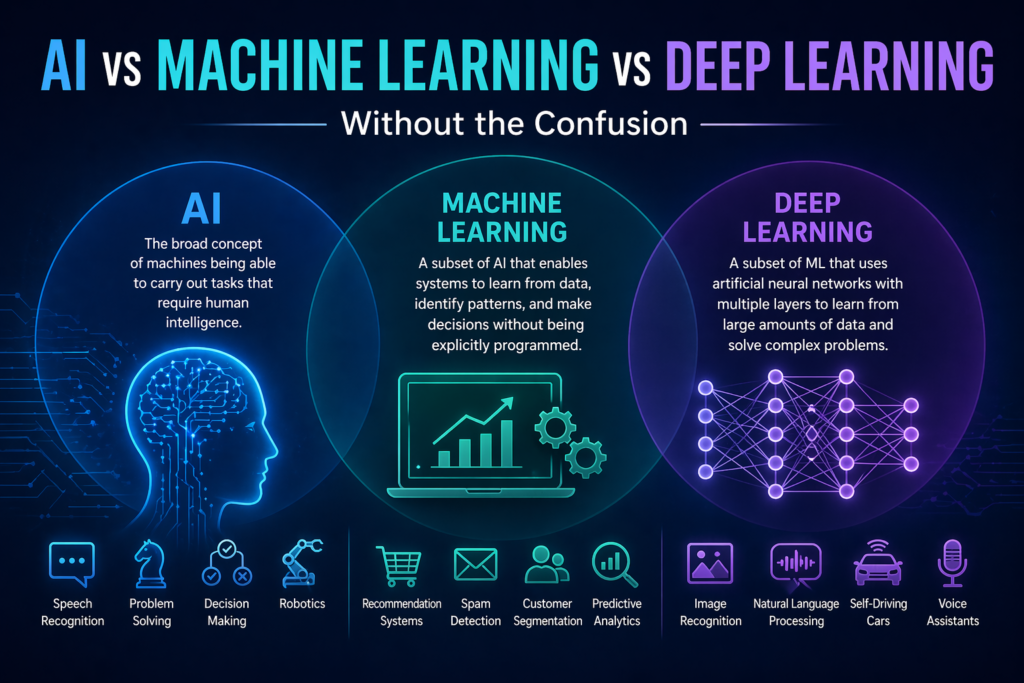

If you peel back the layers of marketing, you won’t find a brain. You’ll find a massive, incredibly fast calculator. At its core, AI is just High-Dimensional Pattern Recognition. Let’s break down what that actually means using things you already understand.

1. The “Connect-the-Dots” Problem

Imagine I give you a piece of paper with 100 random dots. Your brain instantly tries to find a shape—maybe it’s a circle, or a face. That is pattern recognition.

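Now, imagine those dots aren’t just on a flat 2D page. Imagine they are floating in a 3D room. Still manageable, right? But AI operates in hundreds or thousands of dimensions. Humans can’t visualize a 500-dimensional space, but computers don’t need to “see” it to find the patterns within it. To a computer, a point in 500 dimensions is just a list of 500 numbers, and measuring how far apart two such lists are is ordinary arithmetic.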
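
To make that less abstract, here is a minimal sketch in Python. The 500 dimensions, the random points, and the helper names `random_point` and `nudge` are all made up for illustration. It only shows that "distance" works the same way in 500 dimensions as it does on a flat page.

```python
import math
import random

random.seed(0)  # fixed seed so the run is repeatable

DIMENSIONS = 500  # hypothetical size, matching the example in the text


def random_point(dimensions):
    """Return one point as a list of coordinates, each between 0 and 1."""
    return [random.random() for _ in range(dimensions)]


def nudge(point, amount):
    """Return a copy of the point with every coordinate shifted slightly."""
    return [x + random.uniform(-amount, amount) for x in point]


anchor = random_point(DIMENSIONS)
near = nudge(anchor, 0.01)      # a near-copy of the anchor
far = random_point(DIMENSIONS)  # an unrelated random point

# math.dist is the ordinary straight-line (Euclidean) distance.
# It works for any number of coordinates, not just 2 or 3.
dist_near = math.dist(anchor, near)
dist_far = math.dist(anchor, far)

print(f"distance to the near-copy: {dist_near:.3f}")
print(f"distance to the unrelated point: {dist_far:.3f}")
```

The near-copy comes out far closer to the anchor than the unrelated point does. Nobody had to picture the space for that to work.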

2. The “Neighborhood” Analogy

To an AI, a concept like a “cat” isn’t a fluffy animal with whiskers. Instead, the AI turns a cat into a long list of numbers, a “coordinate” on a map.

- Dimension 1: Is it small?

- Dimension 2: Does it have fur?

- Dimension 3: Is it a pet?

Now, imagine adding 10,000 more specific questions (dimensions). In real systems the dimensions are learned from data rather than written out as tidy questions, but the effect is the same. In this massive mathematical map, every “cat” data point will end up landing in the same “neighborhood.” “Dogs” will land in a nearby suburb, and “Toasters” will be in a completely different zip code.

When you show an AI a new photo, it doesn’t “think.” It just asks: “Where does this new point land on my map?” If it lands in the middle of Cat Town, it says “Cat.”
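
Here is a rough sketch of that "where does it land?" step, using only the three toy dimensions from the list above. The scores, the `towns` dictionary, and the `classify` function are invented for illustration. Real image models learn their coordinates from data and use far more dimensions, so treat this as a cartoon of the idea rather than how any particular system works.

```python
import math

# Hypothetical hand-made coordinates:
# (is it small?, does it have fur?, is it a pet?)
# Each answer is a score from 0.0 (no) to 1.0 (yes).
examples = {
    "cat": [(0.9, 1.0, 1.0), (0.8, 0.9, 0.9)],
    "dog": [(0.5, 1.0, 1.0), (0.3, 0.9, 0.9)],
    "toaster": [(0.9, 0.0, 0.0), (0.8, 0.0, 0.1)],
}


def centroid(points):
    """Average the points coordinate by coordinate to find a neighborhood's center."""
    return tuple(sum(axis) / len(points) for axis in zip(*points))


# One center per label: the middle of "Cat Town", "Dog Town", and so on.
towns = {label: centroid(points) for label, points in examples.items()}


def classify(point):
    """Return the label whose neighborhood center is closest to the point."""
    return min(towns, key=lambda label: math.dist(point, towns[label]))


print(classify((0.85, 0.95, 1.0)))  # prints "cat"
print(classify((0.9, 0.05, 0.0)))   # prints "toaster"
```

Notice that Dog Town sits much closer to Cat Town than Toaster Town does, which is the "nearby suburb" versus "different zip code" picture in numbers.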

3. Scale: Why it Feels Like “Thinking”

The reason this feels like intelligence is simply the scale.

Modern models (like the ones we use in 2026) have billions of adjustable numbers, called parameters, that together encode these “neighborhood” relationships. When a model writes a sentence, it isn’t reflecting on the meaning of life. It’s calculating the probability of each word that could come next, based on the “map” it built from reading a vast amount of text, much of it from the internet.

If I say: “The best thing since sliced…” Your brain predicts: “…bread.”

The AI does the same thing, but it assigns a probability to every word in its vocabulary, at every step, across a map more complex than we can imagine.
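
A toy version of next-word prediction fits in a few lines. The sketch below simply counts which word follows which in a tiny made-up corpus. Modern language models use neural networks rather than raw counts, so this is a stand-in for the idea, not their actual method, and the corpus and the `next_word_probabilities` function are assumptions for illustration.

```python
from collections import Counter, defaultdict

# A tiny made-up corpus standing in for the model's training text.
corpus = (
    "the best thing since sliced bread . "
    "she sliced bread for lunch . "
    "he sliced cheese for dinner . "
    "fresh sliced bread is the best ."
).split()

# Count which word follows each word.
following = defaultdict(Counter)
for current_word, next_word in zip(corpus, corpus[1:]):
    following[current_word][next_word] += 1


def next_word_probabilities(word):
    """Turn the raw follow-up counts for a word into probabilities."""
    counts = following[word]
    total = sum(counts.values())
    return {candidate: count / total for candidate, count in counts.items()}


probabilities = next_word_probabilities("sliced")
prediction = max(probabilities, key=probabilities.get)

print(probabilities)  # {'bread': 0.75, 'cheese': 0.25}
print(prediction)     # bread
```

In this corpus “bread” follows “sliced” three times out of four, so it wins. The model has no idea what bread is. It only knows what tends to come next.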

4. The Reality Check

Because AI is just a master of patterns, it has a major weakness: It doesn’t actually know what it’s saying.

- The Win: It can find patterns in medical data or global climate shifts that are too complex for humans to ever spot.

- The Fail: If you give it data that doesn’t fit its map, it often won’t say “I don’t know.” It will confidently guess based on the closest pattern it has, which leads to what we call hallucinations.
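
The “Fail” case is easy to reproduce on the toy map from earlier. In the sketch below, the neighborhood centers, the whale example, and the `max_distance` cutoff are all hypothetical. Hallucinations in real language models have more causes than this, so read it as an illustration of the “closest pattern” idea only.

```python
import math

# Hypothetical neighborhood centers:
# (is it small?, does it have fur?, is it a pet?)
towns = {
    "cat": (0.85, 0.95, 0.95),
    "dog": (0.40, 0.95, 0.95),
    "toaster": (0.85, 0.00, 0.05),
}


def closest_town(point):
    """Return the nearest label and how far away it is."""
    label = min(towns, key=lambda name: math.dist(point, towns[name]))
    return label, math.dist(point, towns[label])


def confident_guess(point):
    """Always answer with the nearest label, no matter how far away it is."""
    return closest_town(point)[0]


def cautious_guess(point, max_distance=0.5):
    """Answer only when the point is near a known neighborhood.

    The 0.5 cutoff is an arbitrary assumption for this example.
    """
    label, distance = closest_town(point)
    return label if distance <= max_distance else "I don't know"


# A blue whale: not small, no fur, not a pet. Nothing on the map resembles it.
whale = (0.0, 0.0, 0.0)

print(confident_guess(whale))  # prints "toaster"
print(cautious_guess(whale))   # prints "I don't know"
```

The first function calls a whale a toaster because a toaster is the least-wrong option on its map. The second one only avoids the mistake because someone added an explicit rule for “too far from anything I know.”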

The Takeaway

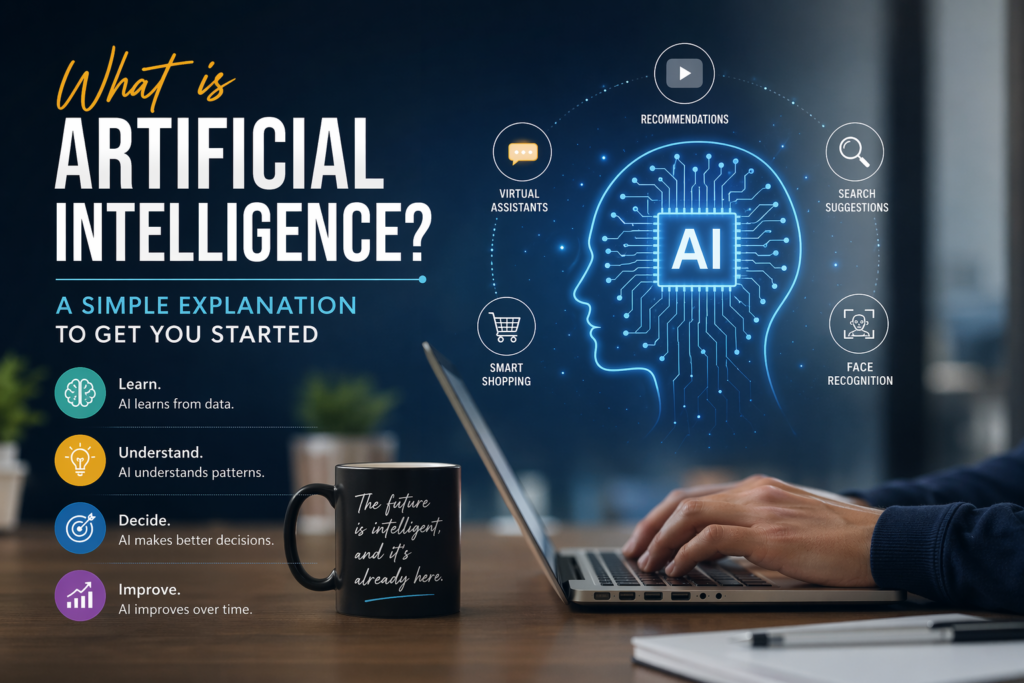

AI isn’t a digital person; it’s a high-speed pattern-matching engine. It takes the chaos of the world—images, text, sounds—and turns it into an organized map of numbers.

Once you realize AI is just a master of “mathematical neighborhoods,” the “magic” disappears, and you’re left with one of the most powerful tools ever built.